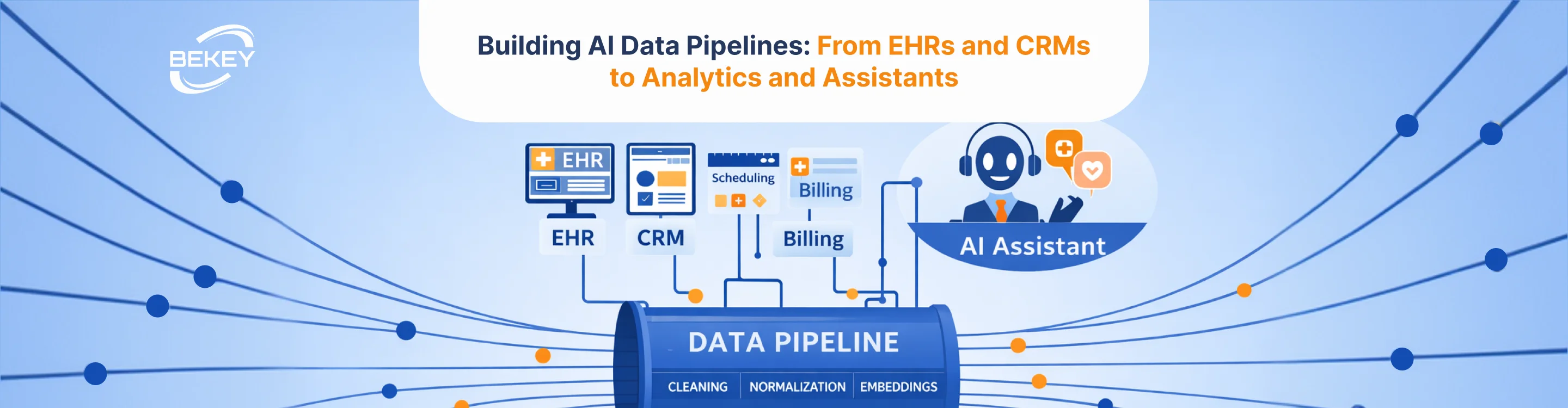

Building AI Data Pipelines: From EHRs and CRMs to Analytics and Assistants

- Why AI Systems Depend on Data Pipeline Architecture

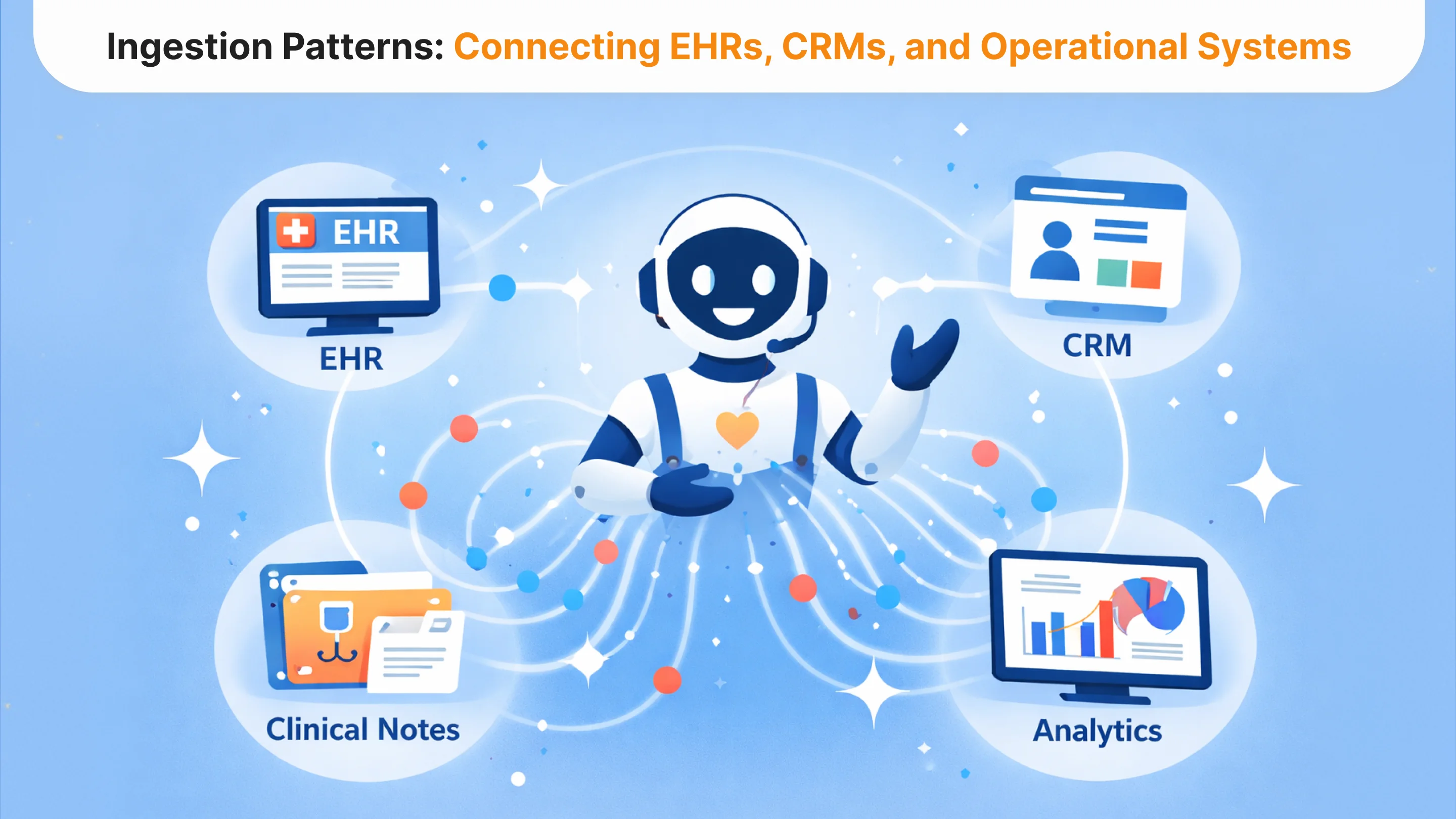

- Ingestion Patterns: Connecting EHRs, CRMs, and Operational Systems

- Data Quality: The Hidden Constraint in Healthcare AI

- Embeddings: Turning Unstructured Records into AI-Ready Data

- Monitoring AI Data Pipelines in Production

- The Infrastructure Behind Scalable Healthcare AI

In many healthcare AI initiatives, the conversation starts with models. Teams evaluate large language models, explore analytics capabilities, and design assistants that promise to simplify clinical or operational workflows.

But the real bottleneck usually appears much earlier.

AI systems depend on data pipelines that can reliably move information from operational systems into analytics environments, automation workflows, and AI applications. In healthcare organizations, those systems often include electronic health records, CRMs, scheduling platforms, revenue cycle tools, and a growing number of external data sources. Each system stores data differently, updates at different speeds, and follows different governance rules.

Without a coherent data pipeline architecture, even sophisticated AI models struggle to produce useful outputs.

Healthcare AI initiatives, therefore, depend less on model selection than on how data is collected, transformed, and monitored before it reaches the model layer. Inconsistent ingestion patterns, incomplete records, and poorly structured datasets can quietly undermine analytics, automation, and assistant workflows. What appears to be a model performance issue is often a data pipeline issue.

For CTOs and data leaders, building reliable AI data pipelines means addressing several interconnected challenges: integrating data from EHRs and CRMs, maintaining data quality across systems, generating embeddings for unstructured information, and monitoring pipelines as AI applications scale.

This article examines how healthcare organizations can design AI data pipelines that support analytics, operational automation, and AI assistants. Rather than focusing on isolated tools, we look at the architecture that allows data to move consistently from operational systems into production AI workflows.

Why AI Systems Depend on Data Pipeline Architecture

The Limiting Factor Is Data

Healthcare AI systems rely on continuous flows of structured and unstructured data. Clinical records, scheduling information, patient communication, revenue cycle data, and operational metrics must move from source systems into analytics environments and AI services.

If that flow is incomplete or inconsistent, AI systems cannot reliably support analytics, automation, or assistant workflows.

Fragmented Healthcare Data

Healthcare organizations operate across multiple specialized systems:

- EHR platforms store clinical records and encounter data

- CRMs capture patient engagement and communication

- Scheduling systems manage appointments and capacity

- Revenue cycle tools generate billing and claims data

These systems were designed for operational tasks, not for AI analytics. As a result, data required for AI applications is often fragmented across platforms.

Building effective AI data pipelines means designing an architecture that unifies these sources while preserving governance controls and operational reliability.

When the data foundation is weak, AI projects remain experimental. When it is strong, organizations can support analytics, automation, and AI assistants at scale.

Ingestion Patterns: Connecting EHRs, CRMs, and Operational Systems

Designing Reliable Data Ingestion

The first step in building AI data pipelines is establishing reliable ingestion patterns. Healthcare organizations must determine how data moves from operational systems into analytics platforms and AI services without disrupting core workflows.

In practice, this means integrating multiple types of systems. EHRs generate clinical records and encounter documentation. CRMs capture patient communication. Operational systems such as scheduling platforms and revenue cycle tools produce high-volume transactional data.

APIs, Batch Pipelines, and Hybrid Models

These systems rarely share a unified data model. Some expose modern APIs that support real-time data access. Others rely on batch exports or integration layers that synchronize data periodically.

Designing ingestion pipelines, therefore, requires balancing:

- Data freshness requirements

- System reliability

- Healthcare governance constraints

Real-time ingestion may be necessary for operational automation, while analytics pipelines can often rely on periodic synchronization.

The goal is not simply moving data, but ensuring that downstream AI systems receive consistent, validated inputs.
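The batch side of a hybrid model can be sketched as an incremental sync that only pulls records changed since the last run. This is a minimal illustration, not a reference to any specific EHR or CRM API: the `incremental_batch` function, the `updated_at` field, and the sample rows are all hypothetical.

```python
from datetime import datetime, timezone


def incremental_batch(records, watermark):
    """Return records changed after `watermark`, plus the advanced watermark.

    `records` is an iterable of dicts carrying an `updated_at` datetime.
    The field name is an assumption; source systems expose this differently.
    """
    fresh = [r for r in records if r["updated_at"] > watermark]
    # If nothing changed, keep the old watermark so the next run starts there.
    new_watermark = max((r["updated_at"] for r in fresh), default=watermark)
    return fresh, new_watermark


# Hypothetical export from a scheduling system.
source_rows = [
    {"id": "appt-1", "updated_at": datetime(2024, 1, 1, 8, 0, tzinfo=timezone.utc)},
    {"id": "appt-2", "updated_at": datetime(2024, 1, 2, 9, 30, tzinfo=timezone.utc)},
    {"id": "appt-3", "updated_at": datetime(2024, 1, 3, 14, 15, tzinfo=timezone.utc)},
]

last_sync = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
batch, last_sync = incremental_batch(source_rows, last_sync)
print([r["id"] for r in batch])  # ['appt-2', 'appt-3']
```

Persisting the watermark between runs is what keeps periodic synchronization cheap. A real-time path would typically replace the polling loop with events or webhooks while feeding the same downstream validation.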

Data Quality: The Hidden Constraint in Healthcare AI

Once ingestion pipelines are established, the next challenge quickly becomes visible: data quality.

Healthcare systems generate enormous volumes of information, but that data is rarely clean or standardized. Clinical notes contain unstructured text. Coding conventions vary across departments. Fields that appear identical across systems may represent different concepts or follow different formatting rules. Even small inconsistencies can cascade through analytics pipelines and AI applications.

For AI systems, these issues are not minor technical inconveniences. They directly affect model performance and reliability.

Predictive analytics models rely on consistent historical data to produce meaningful signals. AI assistants depend on structured context to generate accurate responses. Automation workflows require clear event triggers and validated inputs. When data is incomplete, duplicated, or inconsistently labeled, these systems behave unpredictably.

Healthcare AI pipelines, therefore, require a deliberate data normalization layer. This layer is responsible for cleaning, validating, and harmonizing incoming data before it enters downstream analytics or AI services. It may involve resolving duplicate patient identifiers, standardizing terminology across systems, validating field formats, and enriching records with missing metadata.
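As a rough sketch of such a normalization layer, the example below validates field formats, maps local terms to a canonical vocabulary, and drops duplicate patient identifiers. Every name here is an assumption made for illustration: the `mrn`, `dob`, and `diagnosis` fields, the `TERM_MAP` table, and the rule that digits alone identify a patient. A production pipeline would rely on a terminology service and a proper patient-matching process.

```python
import re
from datetime import datetime

# Hypothetical local-term to canonical-term map.
TERM_MAP = {
    "htn": "hypertension",
    "high blood pressure": "hypertension",
    "dm2": "type 2 diabetes",
}


def normalize_record(raw):
    """Clean one raw record and return (record, errors)."""
    errors = []

    # Keep only digits so "MRN-00123" and "00123" compare equal.
    mrn = re.sub(r"\D", "", str(raw.get("mrn") or ""))
    if not mrn:
        errors.append("missing mrn")

    # Accept two common date layouts and emit ISO 8601.
    dob = None
    for fmt in ("%Y-%m-%d", "%m/%d/%Y"):
        try:
            dob = datetime.strptime(str(raw.get("dob") or ""), fmt).date().isoformat()
            break
        except ValueError:
            continue
    if dob is None:
        errors.append("unparseable dob")

    term = str(raw.get("diagnosis") or "").strip().lower()
    return {"mrn": mrn, "dob": dob, "diagnosis": TERM_MAP.get(term, term)}, errors


def normalize_batch(rows):
    """Split rows into clean, de-duplicated records and rejected records."""
    clean, rejected, seen = [], [], set()
    for raw in rows:
        record, errors = normalize_record(raw)
        if errors:
            rejected.append((raw, errors))
        elif record["mrn"] not in seen:  # first occurrence wins
            seen.add(record["mrn"])
            clean.append(record)
    return clean, rejected


rows = [
    {"mrn": "MRN-00123", "dob": "1980-04-02", "diagnosis": "HTN"},
    {"mrn": "00123", "dob": "04/02/1980", "diagnosis": "High blood pressure"},
    {"mrn": "00456", "dob": "not recorded", "diagnosis": "DM2"},
]
clean, rejected = normalize_batch(rows)
print(clean)
print([errors for _, errors in rejected])
```

Rejected records are returned rather than silently dropped, so they can be routed to a review queue instead of disappearing from downstream analytics.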

In practice, maintaining data quality is not a one-time effort. As new systems are introduced and workflows evolve, the pipeline must continuously monitor incoming data and detect anomalies. Without this monitoring, small inconsistencies can quietly propagate into analytics dashboards, automation workflows, and AI assistants.
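One simple form of this monitoring compares the share of missing values in each incoming batch against a known baseline. The sketch below assumes a per-field baseline and a fixed tolerance. The function names, field names, and the 10-point threshold are illustrative choices, not a recommended standard.

```python
def null_rate(rows, field):
    """Fraction of rows where `field` is absent, None, or an empty string."""
    if not rows:
        return 0.0
    missing = sum(1 for r in rows if r.get(field) in (None, ""))
    return missing / len(rows)


def quality_alerts(rows, baseline, tolerance=0.10):
    """Flag fields whose null rate exceeds the baseline by more than `tolerance`."""
    alerts = []
    for field, expected in baseline.items():
        observed = null_rate(rows, field)
        if observed - expected > tolerance:
            alerts.append(
                f"{field}: null rate {observed:.0%} (baseline {expected:.0%})"
            )
    return alerts


# Hypothetical CRM batch: phone numbers suddenly go missing.
crm_batch = [
    {"email": "a@example.org", "phone": "555-0100"},
    {"email": "b@example.org", "phone": ""},
    {"email": "c@example.org", "phone": None},
    {"email": "d@example.org", "phone": "555-0103"},
]
baseline = {"email": 0.02, "phone": 0.05}
print(quality_alerts(crm_batch, baseline))
```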

For CTOs and data leaders, the reliability of AI initiatives often depends less on model selection than on the discipline applied to data quality management.

Embeddings: Turning Unstructured Records into AI-Ready Data

Unlocking Clinical Documentation

A large portion of healthcare data exists in unstructured form. Clinical notes, referral letters, discharge summaries, and patient messages contain valuable information that is difficult to analyze using traditional databases.

Embeddings make this information usable for AI systems.

In an AI data pipeline, embeddings transform unstructured text into numerical representations that capture semantic meaning. Once embedded, documents can be indexed and retrieved based on conceptual similarity rather than simple keyword matching.
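To make "retrieved based on conceptual similarity" concrete, the sketch below ranks documents by cosine similarity between vectors. The three-dimensional vectors are hand-written placeholders standing in for the output of an embedding model, which in practice produces hundreds or thousands of dimensions. The document IDs and the `top_k` helper are hypothetical.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k(query_vec, index, k=2):
    """Return the IDs of the k documents most similar to the query vector."""
    scored = [(cosine_similarity(query_vec, vec), doc_id) for doc_id, vec in index.items()]
    return [doc_id for _, doc_id in sorted(scored, reverse=True)[:k]]


# Placeholder vectors; a real pipeline would get these from an embedding model.
index = {
    "discharge-summary": [0.9, 0.1, 0.0],
    "referral-letter": [0.7, 0.6, 0.1],
    "billing-note": [0.0, 0.1, 0.9],
}
query = [1.0, 0.2, 0.0]
print(top_k(query, index))  # ['discharge-summary', 'referral-letter']
```

A vector database performs the same ranking, usually with approximate nearest-neighbor search so that it stays fast as the index grows.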

How Embeddings Power AI Systems

In healthcare environments, embeddings commonly support:

- AI assistants retrieving context from patient documentation

- Analytics teams analyzing patterns in clinical notes

- Automation systems classifying and routing documents

Managing Embedding Pipelines

Embedding pipelines introduce new architectural considerations. Organizations must determine how documents are segmented, how frequently embeddings are refreshed, and how vector databases scale as data volumes grow.
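Document segmentation is often handled by splitting text into overlapping chunks, so that a sentence falling on a boundary still appears whole in at least one chunk. The following is a minimal word-based sketch. The `chunk_words` name and the default sizes are assumptions, and many pipelines split on tokens, sentences, or note sections instead.

```python
def chunk_words(text, size=50, overlap=10):
    """Split text into chunks of up to `size` words, sharing `overlap` words."""
    if size <= 0 or not 0 <= overlap < size:
        raise ValueError("size must be positive and overlap must be in [0, size)")
    words = text.split()
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):  # this chunk reached the end of the text
            break
    return chunks


note = " ".join(f"w{i}" for i in range(12))  # stand-in for a clinical note
for chunk in chunk_words(note, size=5, overlap=2):
    print(chunk)
```

Larger chunks preserve more context per embedding, while smaller chunks make retrieval more precise. The right balance depends on the documents and the model, so it is worth treating both values as tunable parameters.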

When documentation changes in the EHR, corresponding embeddings must be updated. Without synchronization, AI assistants and analytics systems may rely on outdated context.
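One way to keep embeddings synchronized is to store a content hash alongside each vector and compare it with the current source text on every sync. The sketch below assumes documents are available as plain text keyed by ID. The `plan_sync` function and the note IDs are hypothetical, and the actual embedding and vector-store calls are left out.

```python
import hashlib


def content_hash(text):
    """Stable fingerprint of a document's text."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def plan_sync(documents, indexed_hashes):
    """Decide which embeddings to (re)compute and which to delete.

    documents: {doc_id: current text in the source system}
    indexed_hashes: {doc_id: hash stored with the existing embedding}
    """
    to_embed = [
        doc_id for doc_id, text in documents.items()
        if indexed_hashes.get(doc_id) != content_hash(text)
    ]
    to_delete = [doc_id for doc_id in indexed_hashes if doc_id not in documents]
    return sorted(to_embed), sorted(to_delete)


indexed = {
    "note-1": content_hash("Patient stable."),
    "note-2": content_hash("Follow-up in 2 weeks."),
    "note-3": content_hash("Superseded note."),
}
current = {
    "note-1": "Patient stable.",
    "note-2": "Follow-up in 2 weeks (amended).",  # edited in the source
    "note-4": "New referral received.",            # newly created
}
print(plan_sync(current, indexed))  # (['note-2', 'note-4'], ['note-3'])
```

Hashing avoids re-embedding unchanged documents, which matters when embedding calls are the most expensive step in the pipeline.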

Embeddings, therefore, act as a bridge between traditional healthcare records and modern AI applications.

Monitoring AI Data Pipelines in Production

Data Pipelines Are Operational Systems

AI data pipelines do not end once integration is complete. When AI systems move into production, pipelines become operational infrastructure that must be continuously monitored.

Without monitoring, pipelines can degrade silently. Source systems change schemas, APIs evolve, ingestion jobs fail, and data quality issues accumulate. These problems often appear first as model inconsistencies or assistant errors.

Pipeline Reliability and Data Freshness

Monitoring systems must track ingestion success rates, data latency, and dataset completeness. If pipelines fall behind or fail, analytics dashboards and AI assistants may operate on outdated information.
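These three signals can be derived from a simple log of ingestion runs. The sketch below is illustrative only: the run-log shape (`status`, `finished_at`, `rows`), the `pipeline_health` function, and the one-hour freshness threshold are assumptions rather than the output of any particular orchestration tool.

```python
from datetime import datetime, timedelta, timezone


def pipeline_health(runs, now, expected_rows, max_lag=timedelta(hours=1)):
    """Summarize success rate, data lag, and completeness from a run log."""
    succeeded = [r for r in runs if r["status"] == "success"]
    success_rate = len(succeeded) / len(runs) if runs else 0.0

    # Freshness is measured from the most recent successful run.
    latest = max(succeeded, key=lambda r: r["finished_at"], default=None)
    lag = now - latest["finished_at"] if latest else None

    # Completeness compares rows loaded against the volume normally expected.
    completeness = latest["rows"] / expected_rows if latest and expected_rows else 0.0

    return {
        "success_rate": success_rate,
        "lag": lag,
        "completeness": completeness,
        "stale": lag is None or lag > max_lag,
    }


def at(hour, minute=0):
    return datetime(2024, 1, 1, hour, minute, tzinfo=timezone.utc)


runs = [
    {"status": "success", "finished_at": at(9), "rows": 1000},
    {"status": "success", "finished_at": at(10), "rows": 950},
    {"status": "failed", "finished_at": at(11), "rows": 0},
    {"status": "failed", "finished_at": at(11, 45), "rows": 0},
]
report = pipeline_health(runs, now=at(12), expected_rows=1000)
print(report)
```

In this sample the last two runs failed, so the report marks the dataset as stale even though half of all runs succeeded. That is the condition under which dashboards and assistants would otherwise keep serving two-hour-old data without any visible error.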

Detecting Schema Changes

Healthcare data environments evolve constantly. New fields appear, formats change, and workflows introduce new event types. Monitoring tools must detect schema drift early to prevent errors from propagating downstream.
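A basic drift check compares the fields and value types seen in an incoming batch against an expected schema. The example below is a simplified sketch: `infer_schema`, `schema_drift`, and the field names are hypothetical, and it tracks only Python type names, where a production check would also cover formats, ranges, and nested structures.

```python
def infer_schema(rows):
    """Map each field name to the set of value type names seen in `rows`."""
    schema = {}
    for row in rows:
        for field, value in row.items():
            schema.setdefault(field, set()).add(type(value).__name__)
    return schema


def schema_drift(expected, observed):
    """Report fields that were added, removed, or changed type."""
    shared = set(expected) & set(observed)
    return {
        "added": sorted(set(observed) - set(expected)),
        "removed": sorted(set(expected) - set(observed)),
        # A field drifts if it shows any type the expected schema does not allow.
        "type_changed": sorted(f for f in shared if not observed[f] <= expected[f]),
    }


expected = {"patient_id": {"str"}, "visit_date": {"str"}, "copay": {"float"}}
incoming = [
    {"patient_id": "p-1", "visit_date": "2024-01-01", "telehealth": True},
    {"patient_id": 2, "visit_date": "2024-01-02", "telehealth": False},
]
print(schema_drift(expected, infer_schema(incoming)))
```

Running a check like this at the ingestion boundary surfaces a renamed or retyped field as an explicit alert, before it shows up downstream as a degraded model or a confused assistant.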

Observability for AI Applications

As AI systems scale, observability must extend to how data is consumed. AI assistants, analytics models, and automation workflows depend on specific datasets and embeddings. Tracking which datasets and embeddings each consumer reads, and how current they are, makes it possible to detect when these components fall out of sync.

For organizations deploying AI at scale, pipeline monitoring transforms data infrastructure from a static integration layer into a continuously managed system.

The Infrastructure Behind Scalable Healthcare AI

In healthcare AI conversations, attention often centers on models and applications. In practice, the systems that determine whether AI works at scale are much less visible.

AI assistants, analytics platforms, and automation workflows all depend on the same underlying foundation: reliable AI data pipelines. Without consistent ingestion from EHRs and CRMs, disciplined data quality management, embedding pipelines for unstructured records, and production monitoring, even well-designed AI systems struggle to deliver consistent results.

For CTOs and data leaders, building AI data pipelines is therefore not just a technical integration task. It is the infrastructure decision that determines whether AI initiatives remain isolated experiments or become part of everyday operations.

Organizations that invest in this foundation early avoid rebuilding integrations for every project. Once reliable pipelines are in place, new analytics models, automation workflows, and AI assistants can build on the same architecture rather than requiring new integrations for every use case. That reuse shortens the path from a new use case to production and makes it easier to scale AI across the organization.

If you are evaluating how to design or improve AI data pipelines across EHRs, CRMs, and analytics environments, our AI data architecture consulting services can help assess the ingestion patterns, data quality frameworks, and monitoring infrastructure required to support production AI systems.
