Why Your Healthcare Business Needs to Be HIPAA Compliant

Healthcare and wellness are our main areas of expertise, so most of our clients come from that field: they develop software for clinics, lifestyle and self-care apps for patients, solutions for clinical trials, and so on. Yet only a few of them know that they need to be HIPAA-compliant to bring their software to the US market without being fined by the Office for Civil Rights (OCR) of the US Department of Health and Human Services (HHS).

We decided to create a content series that will explore regulations that affect healthcare tech companies in different countries. This first part will be about HIPAA and will answer some of the most common questions related to the topic.

So, what is HIPAA? Why was it created?

The Health Insurance Portability and Accountability Act (HIPAA) was signed into law in 1996. As you can guess from the name, it was originally meant to regulate insurance: in particular, it allowed people to keep their health insurance coverage when changing jobs. Its Administrative Simplification provisions also set national standards for electronic healthcare transactions, which pushed the industry toward computerized information exchange.

Since then, HIPAA has changed a lot, and preventing the disclosure of health information has become one of its main functions.

In 2015, Anthem, one of the largest health benefits companies in America, reported the largest health data breach in US history: hackers stole the health information of almost 79 million people. The attack started with phishing emails sent to an Anthem subsidiary; some employees opened them, and that was enough. In 2018, Anthem paid OCR a $16 million settlement and promised to better protect its systems and train its employees.

HIPAA outlines how to do the latter. And yet, considering that healthcare loses more money to security breaches than other industries and reacts to them more slowly, there is plenty of room for improvement.

Should every business in healthcare be HIPAA compliant?

The short answer is no. HIPAA offers security best practices that are useful for any organization, but not every business in the healthcare industry is legally required to implement them.

HIPAA covers two types of businesses:

Covered entities, which provide healthcare services (treatment and consultations, and yes, that includes psychologists) or pay for and process healthcare. That means hospitals, clinics, private practices, insurers, pharmacies, Medicare, healthcare clearinghouses, and so on. HHS defines three groups: health care providers (those that conduct certain transactions, such as billing, electronically), health plans, and health care clearinghouses.

Business associates, which are companies that work on behalf of covered entities or other business associates, such as healthcare providers, insurers, and pharmacies. That includes us. If you’re building an app for a clinic that asks patients to enter their medication schedule, if you’re a software company that helps hospitals optimize their EHRs, or if you’re writing a small e-prescribing module for them, you need to be HIPAA-compliant.

In other words, if you handle, store, or transmit protected health information (PHI) as a covered entity or on behalf of a covered entity or business associate, you need to be HIPAA-compliant.

So, PHI. Even if you don’t see it, it might be there. What is it?

Protected health information is individually identifiable health information: data from a person’s medical records, including billing records, and any health data in the patient’s insurance company systems. So lab results, records of phone conversations between a patient and a therapist or nurse, and medication information are all PHI. Anonymous (de-identified) data, which cannot be linked to a person’s name, Social Security number, or other details that could establish their identity, is not PHI.
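To make the line between PHI and de-identified data concrete, here is a minimal Python sketch of stripping identifiers from a patient record. It is loosely modeled on HIPAA’s Safe Harbor method, which lists 18 types of identifiers that must be removed; the field names and the `strip_identifiers` helper are hypothetical, and the sketch covers only a few of those identifiers (it skips ZIP codes, ages over 89, and free text, for example).

```python
from datetime import date

# Hypothetical field names; real record schemas will differ.
# Only a few of the 18 Safe Harbor identifier types are covered here.
DIRECT_IDENTIFIERS = {"name", "ssn", "phone", "email", "address", "medical_record_number"}


def strip_identifiers(record: dict) -> dict:
    """Return a copy of `record` with direct identifiers removed.

    A birth date is reduced to the year, since Safe Harbor allows the year
    but not the other date elements. Simplified sketch only: it does not
    handle ZIP codes, ages over 89, or free-text fields.
    """
    cleaned = {k: v for k, v in record.items() if k not in DIRECT_IDENTIFIERS}
    birth_date = cleaned.pop("birth_date", None)
    if isinstance(birth_date, date):
        cleaned["birth_year"] = birth_date.year
    return cleaned


patient = {
    "name": "Jane Doe",
    "ssn": "000-00-0000",
    "birth_date": date(1980, 5, 17),
    "diagnosis": "type 2 diabetes",
}
print(strip_identifiers(patient))  # {'diagnosis': 'type 2 diabetes', 'birth_year': 1980}
```

The original record is left untouched; only the returned copy is stripped, so the identified version can stay in the protected system.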

To check whether you deal with PHI, ask whether you work (in any way) with information that relates to people’s health and identifies them, and whether you receive it from or transmit it to a covered entity or business associate. If you use a cloud platform to store people’s health information, both you and the platform need to be HIPAA-compliant. If you sponsor a health plan for your employees, that plan is a covered entity and must be compliant too.
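The self-check above boils down to three yes/no questions. Here is a minimal Python sketch of that screen; `DataFlow` and `likely_phi` are hypothetical names, and a “yes” only means the data deserves a closer look, not that a legal determination has been made.

```python
from dataclasses import dataclass


@dataclass
class DataFlow:
    """One kind of data your product touches (hypothetical model)."""
    description: str
    relates_to_health: bool             # diagnoses, lab results, medications, payments for care
    identifiable: bool                  # can it be tied to a specific person?
    exchanged_with_covered_party: bool  # received from or sent to a covered entity or business associate


def likely_phi(flow: DataFlow) -> bool:
    """Rough first-pass screen: all three answers must be yes."""
    return flow.relates_to_health and flow.identifiable and flow.exchanged_with_covered_party


flows = [
    DataFlow("medication schedules synced to a clinic's EHR", True, True, True),
    DataFlow("aggregated, de-identified step counts", True, False, False),
]
for flow in flows:
    print(f"{flow.description}: {'review as PHI' if likely_phi(flow) else 'probably not PHI'}")
```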

What does “HIPAA compliant” mean?

Your business is HIPAA-compliant if it follows HIPAA’s:

Privacy Rule, which sets US standards for when PHI may be used and disclosed. At its core are the definitions of covered entities and business associates (the parties that may handle health information) and everyone’s right to access their health information and request corrections to it.

Security Rule, which requires you to implement administrative, physical, and technical safeguards that protect electronic PHI (ePHI) against leaks, breaches, and other unauthorized disclosure. In practice, the ePHI you handle, store, and transmit must stay confidential (not disclosed to unauthorized people or processes), keep its integrity (not altered or destroyed improperly), and remain available on demand to those authorized to use it.

Breach Notification Rule, which obliges you to notify affected individuals, HHS, and, in certain cases, the media when a breach happens.

What are the risks of not being HIPAA compliant?

First of all, there are fines and penalties. In 2018, OCR collected $28.7 million from covered entities and their business associates (who, by the way, have only been directly liable for HIPAA violations since the 2013 Omnibus Rule).

On average, a HIPAA breach costs around $200 per victim (a person whose PHI was exposed).

There are civil and criminal HIPAA penalties. OCR imposes civil penalties on covered entities and business associates that break HIPAA rules without harmful intent. The minimum fine depends on how culpable the organization was:

- If they didn’t know (and, with reasonable diligence, would not have known) that they were violating a HIPAA rule, the fine is at least $100 per violation.

- If the violation had a reasonable cause but did not involve willful neglect, the fine is at least $1,000 per violation.

- If the violation was due to willful neglect but was corrected within 30 days, the fine is at least $10,000 per violation.

- If the violation was due to willful neglect and was not corrected, the fine is at least $50,000 per violation.
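To see how these tiers add up, here is a minimal Python sketch of the lowest possible civil penalty for a number of identical violations in one calendar year. It assumes the statutory base amounts in 45 CFR 160.404 (minimums of $100, $1,000, $10,000, and $50,000 per violation, with a $1.5 million annual cap for violations of the same requirement); the actual amounts are adjusted for inflation every year, and OCR has announced lower annual caps for the less culpable tiers, which this sketch ignores. `Culpability` and `minimum_penalty` are hypothetical names.

```python
from enum import Enum


class Culpability(Enum):
    """The four civil penalty tiers, from least to most culpable."""
    DID_NOT_KNOW = 1
    REASONABLE_CAUSE = 2
    WILLFUL_NEGLECT_CORRECTED = 3
    WILLFUL_NEGLECT_NOT_CORRECTED = 4


# Statutory base amounts in USD (45 CFR 160.404), before inflation adjustments.
MIN_PER_VIOLATION = {
    Culpability.DID_NOT_KNOW: 100,
    Culpability.REASONABLE_CAUSE: 1_000,
    Culpability.WILLFUL_NEGLECT_CORRECTED: 10_000,
    Culpability.WILLFUL_NEGLECT_NOT_CORRECTED: 50_000,
}
# Calendar-year cap for violations of an identical provision.
ANNUAL_CAP = 1_500_000


def minimum_penalty(tier: Culpability, violations: int) -> int:
    """Lowest possible civil penalty for `violations` identical violations in one year."""
    return min(MIN_PER_VIOLATION[tier] * violations, ANNUAL_CAP)


print(minimum_penalty(Culpability.DID_NOT_KNOW, 500))                  # 50000
print(minimum_penalty(Culpability.WILLFUL_NEGLECT_NOT_CORRECTED, 40))  # 1500000
```

Even in the lowest tier, 500 violations add up to a $50,000 minimum; in the highest tier, 40 violations already reach the annual cap.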

Criminal penalties apply to people who knowingly obtain or disclose PHI in violation of HIPAA. Depending on the nature of the violation (was the PHI obtained under false pretenses? was it disclosed for personal gain or to be sold?), offenders face fines of up to $50,000, $100,000, or $250,000 and prison terms of up to one, five, or ten years.

The next big risk of disclosing patients’ information is compromising their safety.

A lot of healthcare organisations still use legacy systems, while hacking as a profession keeps getting more advanced. Cybercriminals love healthcare because, as we’ve said, the industry’s security is not even close to good, and neither is employees’ security training.

Hackers sell stolen medical records on the dark web, where fraudsters can combine them with banking information: the extensive background information in PHI, along with Social Security numbers and ID details, lets them successfully apply for credit and loans. They also obtain medical supplies and resell them.

It would be wrong to say that HIPAA prevents absolutely all cyberattacks or protects organisations from them 100%. But it provides best practices for being as ready for them as possible.

So, yeah. The risks are significant even from a purely legal perspective. On top of that, security breaches disrupt the way people receive healthcare and harm hospital workers’ workflows. Consider the cost of business losses, damage to your reputation, and long-term negative coverage in the media. Even if you didn’t know HIPAA existed (after this article, we hope that’s not the case), you still get fined. So it’s much easier to think about safeguards from the start.

Most common causes of HIPAA violations and how to prevent them

Organization-wide risk assessment and management failures

Such violations usually occur when devices with sensitive data are stolen and nobody notices. And while encryption is only addressable (not mandatory) under HIPAA, risk assessment is mandatory.

A risk assessment explains how to detect and respond when a laptop with sensitive data is stolen or lost, and how to prevent that from happening.

The risk analysis guidance on the HHS website states the following: “An organization should determine the most appropriate way to achieve compliance, taking into account the characteristics of the organization and its environment.”

Among the requirements is an “accurate and thorough assessment of the potential risks and vulnerabilities to the confidentiality, integrity, and availability of electronic protected health information.”

No matter what kind of risk assessment and analysis you decide to conduct, several elements are essential.

- You have to determine the scope of the analysis and document it. The scope must include all computers, networks, locations, portable devices, digital assistants, and other devices that, in one way or another, connect to PHI. All of it.

- Then, identify where ePHI is “stored, received, maintained or transmitted.” Do you use a cloud platform? Document it. An e-prescribing service? Same. Everything needs to be documented. In fact, that’s a good one-size-fits-all HIPAA compliance hack.

Document everything.

- Identify potential risks and vulnerabilities for your environment under different circumstances and threats. That includes stress and penetration tests, emergency preparedness, and so on. Shake up your systems and see if anything falls. If it does, you’re not ready.

- Assess the security measures you have. HIPAA lists security safeguards that compliant organizations must implement or address. Check what you already have (and whether those safeguards are used properly) and what you still need to put in place. Document it all.

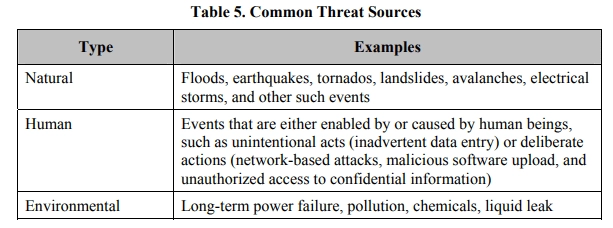

- Find out potential threats, their sources, and their impact. The HHS site describes this as the “likelihood of threat occurrence.” Know which attacks, leaks, and breaches (or perhaps natural disasters) your organization can anticipate, what they could exploit, and what the consequences would be.

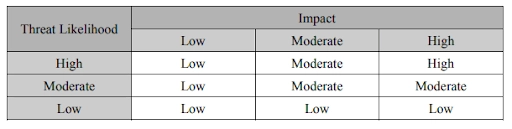

- Determine the risk level: “The risk level determination might be performed by assigning a risk level based on the average of the assigned likelihood and impact levels.” Then, document how to respond to each risk level.

Document all of that. Keep it protected. Update it whenever anything changes: for instance, if you plan to adopt a new technology or service, or to use the services of third parties.
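The likelihood, impact, and risk-level steps can be kept in a simple, documented risk register. Here is a minimal Python sketch that applies the averaging approach quoted from HHS; the 1-to-3 scale, the `Risk` class, and the example assets are assumptions for illustration, since HHS does not prescribe a specific scale.

```python
from dataclasses import dataclass

# Hypothetical three-level scale; HHS does not prescribe specific numbers.
LEVELS = {1: "low", 2: "medium", 3: "high"}


@dataclass
class Risk:
    asset: str       # where ePHI is stored, received, maintained, or transmitted
    threat: str
    likelihood: int  # 1 (low) to 3 (high)
    impact: int      # 1 (low) to 3 (high)

    def level(self) -> str:
        """Average likelihood and impact, rounding halves up to the next level."""
        average = (self.likelihood + self.impact) / 2
        return LEVELS[int(average + 0.5)]


register = [
    Risk("staff laptops", "theft or loss", likelihood=2, impact=3),
    Risk("cloud storage", "misconfigured access", likelihood=1, impact=3),
    Risk("e-prescribing service", "outage", likelihood=1, impact=1),
]
for risk in register:
    print(f"{risk.asset} / {risk.threat}: {risk.level()}")
```

Rounding uses `int(average + 0.5)` rather than Python’s built-in `round()`, which rounds halves to the nearest even number (`round(2.5)` is 2) and would quietly downgrade borderline risks.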

The absence of encryption (or other safeguards) for ePHI

As we’ve already mentioned, encryption is not mandatory under HIPAA, but it is an effective security measure. Encrypted data is considered exposed only if the key to it was stolen, which is why encryption shouldn’t be neglected. Data should be protected both at rest (for example, on a memory card or flash drive) and in transit (when it moves over a network, for example from a workstation to a cloud server).
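Protecting data in transit usually means TLS. Here is a minimal Python sketch using the standard `ssl` module to build a client context that verifies server certificates and refuses protocol versions older than TLS 1.2; the TLS 1.2 floor is an assumed policy, and `make_client_context` is a hypothetical helper. Encryption at rest is typically handled by full-disk encryption or a vetted cryptography library and is not shown here.

```python
import ssl


def make_client_context() -> ssl.SSLContext:
    """TLS settings for a client that sends ePHI over a network (sketch)."""
    # create_default_context() loads the system's trusted CAs and turns on
    # certificate verification and hostname checking.
    ctx = ssl.create_default_context()
    # Refuse protocol versions older than TLS 1.2 (an assumed policy choice).
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    return ctx


ctx = make_client_context()
print(ctx.verify_mode == ssl.CERT_REQUIRED, ctx.check_hostname)  # True True
```

The context can then be passed to an HTTPS client, for example as the `context` argument of `urllib.request.urlopen`.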

Snooping on identifiable health information and poor access control

“Zhou’s snooping started shortly after being notified that he would be soon dismissed. He was found to have accessed patient records, including those of celebrities, 323 times. Four of those 323 instances occurred after he officially left the hospital, and were thus federal misdemeanours.” That is how Veriphyr, a software company that tracks impermissible use of PHI, describes the case of Huping Zhou, a former UCLA researcher and the first person sent to prison for violating HIPAA.

So if your employees decide to look into Taylor Swift’s medical records when they aren’t authorized to (or were authorized, but have already left the hospital), that’s a violation, and snooping is one of the most common HIPAA violations. Terminate access for employees who leave your organization, and allow access only from hospital-controlled devices. Sessions should end automatically after short periods of inactivity.
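Those controls can be expressed as a single access check. Here is a minimal Python sketch, assuming a hypothetical 15-minute idle timeout, a hypothetical list of approved device IDs, and per-user lists of authorized patients; `Account`, `Session`, `may_view`, and `audit_log` are illustrative names. It also records every attempt, in the spirit of the Security Rule’s audit controls, so lookups like Zhou’s leave a trail.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta

# Hypothetical policy values; set your own during risk assessment.
IDLE_TIMEOUT = timedelta(minutes=15)
APPROVED_DEVICES = {"ward-3-workstation", "pharmacy-terminal-1"}


@dataclass
class Account:
    user: str
    active: bool = True  # switch off the day the employee leaves
    authorized_patients: set[str] = field(default_factory=set)


@dataclass
class Session:
    account: Account
    device_id: str
    last_activity: datetime


# Every access attempt is recorded so unusual lookups can be reviewed later.
audit_log: list[tuple[str, str, str, bool]] = []


def may_view(session: Session, patient_id: str, now: datetime) -> bool:
    """Allow access only for active staff, on approved devices, in a session that
    has not idled out, to patients they are authorized to see."""
    allowed = (
        session.account.active
        and session.device_id in APPROVED_DEVICES
        and now - session.last_activity <= IDLE_TIMEOUT
        and patient_id in session.account.authorized_patients
    )
    audit_log.append((now.isoformat(), session.account.user, patient_id, allowed))
    return allowed


now = datetime(2024, 1, 15, 9, 0)
nurse = Account("nurse.kim", authorized_patients={"p-17"})
session = Session(nurse, "ward-3-workstation", last_activity=now - timedelta(minutes=5))
print(may_view(session, "p-17", now))  # True
print(may_view(session, "p-99", now))  # False: not her patient
nurse.active = False                   # employee leaves the hospital
print(may_view(session, "p-17", now))  # False
```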

Human errors due to lack of training and culture

Training is an essential part of HIPAA compliance and risk assessment policies. Employees who work with PHI in any way should know HIPAA law, and that is an actual requirement for compliance.

A huge number of violations happen because of human error, and they are preventable. A training program must be thorough and well documented, supported with examples and exercises. Think of working with PHI as getting a driver’s license: people should pass exams, both theoretical and practical, on HIPAA law and how it applies to their everyday workflow.

Training must cover the proper disposal of health records and storing them in a secure location. Employees must know not to plug personal or unknown flash drives into their desktop computers. Gossip and cool stories are fine, but not with patients’ personal information. There’s an actual Reddit post discussing whether a Snapchat post with a screenshot of a patient’s profile in the hospital’s electronic system is a HIPAA violation, and it is.

Of course, software can help prevent users from screenshotting or sharing patients’ information, but it works much better when combined with training that conveys HIPAA’s core idea: patients’ privacy and safety are tied to the confidentiality of their health data.

The final word about HIPAA

The interesting thing about HIPAA is that, in quite a lot of cases, its requirements are vague. For instance, risk analysis is the first step an organization has to take to comply with the Security Rule. But how should it be conducted? What questions will OCR ask?

Some rules are clear: you have to notify affected people about a breach no later than 60 days after discovering it (and HHS within the same window if 500 or more people are affected). Others are not so clear: what if you suspect a breach but don’t know for sure yet? So, there’s a lot to think about.
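The clear part can be sketched in code. Here is a minimal Python sketch of the outer notification deadlines for a covered entity under the Breach Notification Rule: affected individuals within 60 days of discovery, HHS within 60 days if 500 or more people are affected (otherwise within 60 days after the end of the calendar year), and prominent media outlets when more than 500 residents of one state or jurisdiction are affected. `notification_deadlines` is a hypothetical helper. The rule also requires notice “without unreasonable delay,” so these dates are upper bounds, and a business associate notifies the covered entity instead.

```python
from datetime import date, timedelta

# Outer limit from the Breach Notification Rule: notify without unreasonable
# delay and no later than 60 calendar days after discovery.
OUTER_LIMIT = timedelta(days=60)


def notification_deadlines(discovered: date, affected: int, max_in_one_state: int) -> dict[str, date]:
    """Latest notification dates for a covered entity (a simplified sketch)."""
    deadlines = {"individuals": discovered + OUTER_LIMIT}
    if affected >= 500:
        deadlines["HHS"] = discovered + OUTER_LIMIT
    else:
        # Smaller breaches may be reported to HHS in a batch, within 60 days
        # after the end of the calendar year in which they were discovered.
        deadlines["HHS"] = date(discovered.year, 12, 31) + OUTER_LIMIT
    if max_in_one_state > 500:
        # Prominent media outlets must be notified when more than 500
        # residents of a single state or jurisdiction are affected.
        deadlines["media"] = discovered + OUTER_LIMIT
    return deadlines


print(notification_deadlines(date(2024, 3, 1), affected=1200, max_in_one_state=700))
print(notification_deadlines(date(2024, 6, 10), affected=20, max_in_one_state=20))
```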

One thing is certain: HIPAA violations can cost your patients, your users, and your business a lot. We hope the next parts of our Regulations in Healthcare series will help you better understand what to do.
